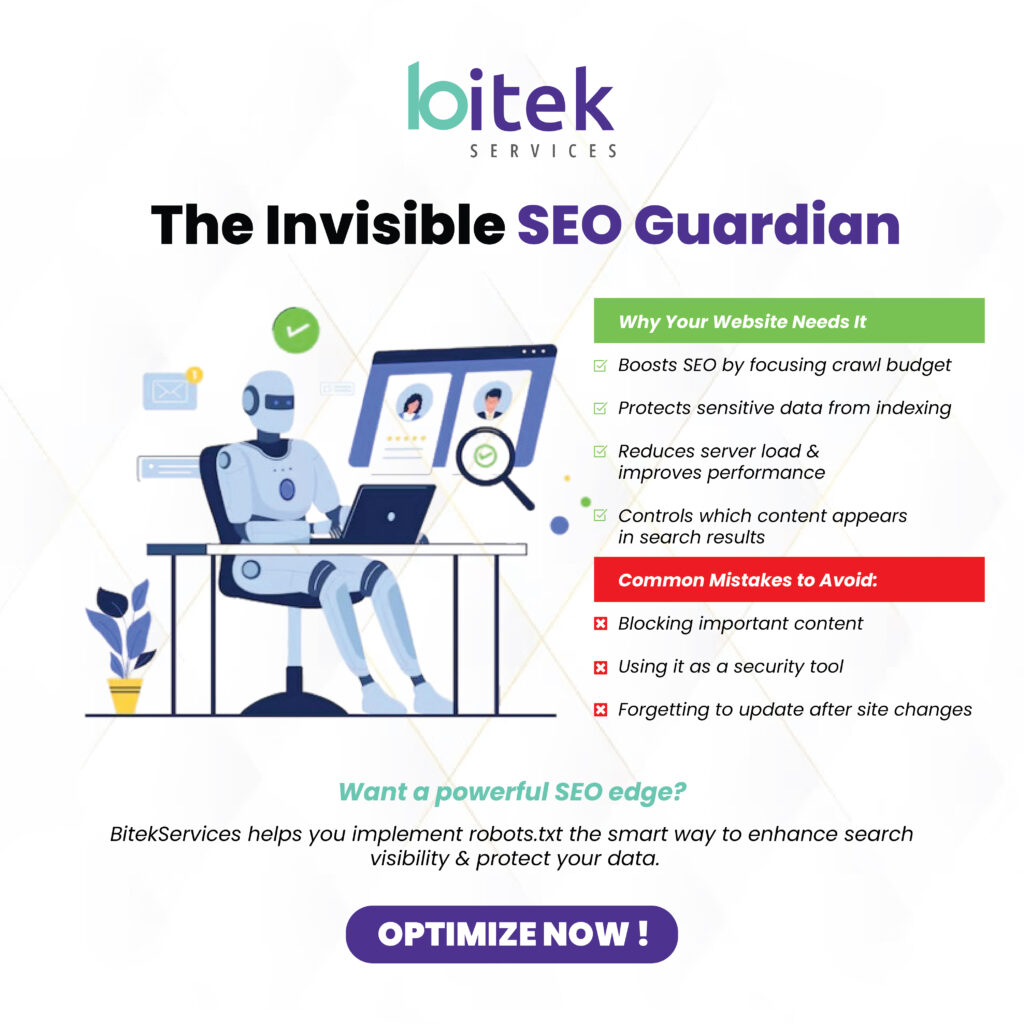

Hidden in the background of every well-optimized website lies a small but powerful file that most business owners have never heard of: the robots.txt file. This unassuming text document serves as a digital traffic controller, guiding search engines through your website while protecting sensitive areas from unwanted attention.

At BitekServices, we’ve helped hundreds of businesses optimize their online presence, and we consistently find that proper robots.txt implementation can dramatically improve search engine rankings while protecting valuable business information. Today, we’ll demystify this essential SEO tool and show you how it can transform your website’s search performance.

What Exactly is a Robots.txt File?

A robots.txt file is a simple text document that lives in your website’s root directory and communicates directly with search engine crawlers (also called “robots” or “bots”). Think of it as a set of polite instructions that tell search engines which parts of your website they should explore and which areas they should avoid.

When Google, Bing, or other well-behaved search engines visit your website, they request the robots.txt file before crawling other pages. The file tells their crawlers which paths they may fetch and which to skip, which helps them crawl your content more efficiently.

The Technical Foundation

The robots.txt file follows the Robots Exclusion Protocol (also known as the Robots Exclusion Standard), which was formalized as RFC 9309 in 2022. Major search engines interpret the core directives consistently, although support for extensions such as Crawl-delay varies from one crawler to another.

The file uses simple commands written in plain text, making it accessible to both search engines and humans. Despite its simplicity, this small file can have a large impact on your website’s search engine optimization and on which parts of your site crawlers visit.

Why Every Business Website Needs a Robots.txt File

Search Engine Optimization Benefits

A properly configured robots.txt file helps search engines crawl your website more efficiently. By directing crawlers away from unimportant pages (like admin areas, duplicate content, or temporary pages), you ensure that search engines spend their time crawling your most valuable content.

Search engines have limited “crawl budget” for each website. By using robots.txt to focus their attention on your important pages, you can improve your chances of having your best content indexed and ranked highly in search results.

Website Security and Privacy Protection

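Robots.txt files can ask search engines not to crawl areas of your website such as admin panels, private documents, or staging environments. This is not a security measure: the file is publicly readable, compliant crawlers follow it voluntarily, and a blocked URL can still show up in search results if other pages link to it. Treat robots.txt as a way to keep crawlers out of low-value areas, and protect business-critical information with authentication and access controls.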

Bandwidth and Server Resource Management

Preventing unnecessary crawling of irrelevant pages reduces server load and bandwidth usage. This is particularly important for businesses with large websites or limited hosting resources, as it helps maintain optimal site performance.

Brand Protection and Content Control

By controlling which content appears in search results, you can better manage your online brand presence. This prevents outdated, incomplete, or sensitive information from appearing in search engine results where potential customers might see it.

Common Robots.txt Use Cases for Businesses

E-commerce Websites

Online retailers often use robots.txt to keep search engines from crawling shopping cart pages, checkout processes, and customer account areas. They might also block crawling of filtered search results that create duplicate content issues.

For example, an e-commerce site might allow crawling of product pages while blocking access to comparison pages or internal search results that don’t provide value to search engines.

Professional Service Businesses

Law firms, medical practices, and consulting companies often use robots.txt to protect client portals, internal documents, and work-in-progress pages from appearing in search results.

These businesses might also block crawling of staff directories or internal communication systems while ensuring their main service pages receive full search engine attention.

Manufacturing and B2B Companies

Industrial businesses frequently use robots.txt to protect proprietary information, dealer portals, and technical documentation from public search results while promoting their main product and service pages.

Content Management and Blog Optimization

Businesses with active blogs use robots.txt to prevent crawling of draft posts, author pages, and tag archives that might create duplicate content issues. This helps focus search engine attention on published, high-quality content.

Understanding Robots.txt Syntax and Commands

Basic Command Structure

Robots.txt files use simple commands that specify which user agents (search engine bots) the rules apply to and which directories or files should be allowed or disallowed.

The most common commands include:

- User-agent: Specifies which search engine crawler the rules apply to

- Disallow: Tells crawlers not to access specific files or directories

- Allow: Explicitly permits access to files or directories (useful for exceptions)

- Sitemap: Provides the location of your XML sitemap file

Practical Examples

A basic robots.txt file might look like this:

User-agent: *
Disallow: /admin/
Disallow: /private/
Allow: /
Sitemap: https://yourwebsite.com/sitemap.xml

This example tells all search engines to avoid the admin and private directories while allowing access to everything else, and provides the location of the sitemap.
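
One way to sanity-check the example above is Python’s standard-library `urllib.robotparser`. This is a minimal sketch, not an official validator: the sample paths are made up for illustration, and Python’s parser applies rules in file order while Google picks the most specific matching rule, so results can differ when rules overlap.

```python
from urllib.robotparser import RobotFileParser

# The basic example from this article, one directive per line.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /private/
Allow: /
Sitemap: https://yourwebsite.com/sitemap.xml
"""

# Parse the text directly; no network request is made.
parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Hypothetical paths, used only to illustrate how the rules apply.
for path in ["/", "/services/", "/admin/login", "/private/report.pdf"]:
    verdict = "allowed" if parser.can_fetch("*", path) else "blocked"
    print(f"{path}: {verdict}")
```

The two `Disallow` lines come before `Allow: /` in the file, which is why a first-match parser like this one still blocks the admin and private directories.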

Advanced Configurations

More sophisticated websites might have different rules for different search engines. For instance, you might allow Google to crawl certain areas while restricting other bots:

User-agent: Googlebot
Disallow: /temp/
Allow: /

User-agent: *
Disallow: /

This configuration lets Googlebot crawl everything except the /temp/ directory while blocking all other crawlers entirely.
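
The per-crawler behavior of this configuration can be checked locally with Python’s `urllib.robotparser`. The sketch below is illustrative only: `ExampleBot` and the sample paths are hypothetical stand-ins for "any other crawler" and "any page".

```python
from urllib.robotparser import RobotFileParser

# The advanced example from this article, with one group per crawler.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /temp/
Allow: /

User-agent: *
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def crawl_report(agents, paths):
    """Map each (user agent, path) pair to True if crawling is permitted."""
    return {(agent, path): parser.can_fetch(agent, path)
            for agent in agents for path in paths}

# "ExampleBot" is a made-up crawler name that falls under the "*" group.
report = crawl_report(["Googlebot", "ExampleBot"],
                      ["/services/", "/temp/draft.html"])
for (agent, path), allowed in report.items():
    print(f"{agent:10} {path:18} {'allowed' if allowed else 'blocked'}")
```

Only Googlebot is allowed anywhere, and even Googlebot is kept out of `/temp/`.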

Common Robots.txt Mistakes That Hurt SEO

Blocking Important Content

The most damaging mistake is accidentally blocking pages you want search engines to find. We’ve seen businesses block their entire website or important sections due to incorrect robots.txt configuration.
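
A simple guard against this mistake is to keep a list of pages that must stay crawlable and check it against the file before every deployment. The following is a rough sketch using Python’s `urllib.robotparser`; the helper name `find_blocked` and the page list are assumptions made for illustration.

```python
from urllib.robotparser import RobotFileParser

def find_blocked(robots_txt, important_paths, user_agent="*"):
    """Return the important paths that the given robots.txt text blocks."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path in important_paths
            if not parser.can_fetch(user_agent, path)]

# A misconfigured file: a lone slash disallows the entire site.
BROKEN = "User-agent: *\nDisallow: /\n"
# The intended file: only the admin area is off limits.
FIXED = "User-agent: *\nDisallow: /admin/\n"

# Hypothetical pages that should always be reachable by crawlers.
IMPORTANT = ["/", "/services/", "/contact/"]

print("broken file blocks:", find_blocked(BROKEN, IMPORTANT))
print("fixed file blocks: ", find_blocked(FIXED, IMPORTANT))
```

An empty list means none of the listed pages are blocked for that user agent.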

Over-Restricting Search Engine Access

Some businesses become overly cautious and block too much content, limiting their search engine visibility. The goal is to guide search engines, not prevent them from finding your valuable content.

Forgetting to Update After Website Changes

When websites are redesigned or restructured, robots.txt files often aren’t updated accordingly. This can lead to blocked access to new important sections or continued blocking of areas that should now be accessible.

Using Robots.txt as a Security Measure

A critical misconception is treating robots.txt as a security tool. The file is publicly accessible and doesn’t actually prevent access—it simply requests that search engines avoid certain areas. Sensitive content should be protected through proper security measures, not just robots.txt.

Creating and Implementing Your Robots.txt File

Planning Your Robots.txt Strategy

Before creating the file, audit your website to identify which areas should be crawled and which should be avoided. Consider your business goals, content strategy, and privacy requirements.

Map out your website structure and identify directories containing admin functions, duplicate content, staging areas, or sensitive information that shouldn’t appear in search results.

File Creation and Formatting

Create a simple text file named “robots.txt” (all lowercase) using any text editor. Ensure the file uses UTF-8 encoding and contains no special formatting or hidden characters that might cause parsing errors.
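
If you prefer to generate the file rather than type it by hand, a short script can take care of the encoding and formatting details. This is a minimal sketch under stated assumptions: `render_robots` and `write_robots` are hypothetical helper names, and the rules shown are placeholders for your own.

```python
import os
import tempfile

def render_robots(groups, sitemaps=()):
    """Build robots.txt text from (user_agent, [(directive, path), ...]) groups."""
    lines = []
    for user_agent, rules in groups:
        lines.append(f"User-agent: {user_agent}")
        for directive, path in rules:
            lines.append(f"{directive}: {path}")
        lines.append("")  # a blank line separates groups
    for url in sitemaps:
        lines.append(f"Sitemap: {url}")
    return "\n".join(lines).rstrip("\n") + "\n"

def write_robots(directory, text):
    """Write robots.txt as plain UTF-8 (no byte-order mark) with LF line endings."""
    path = os.path.join(directory, "robots.txt")
    with open(path, "w", encoding="utf-8", newline="\n") as handle:
        handle.write(text)
    return path

text = render_robots(
    [("*", [("Disallow", "/admin/"), ("Disallow", "/private/")])],
    sitemaps=["https://yourwebsite.com/sitemap.xml"],
)

# Written to a temporary directory here; in practice this would be the web root.
with tempfile.TemporaryDirectory() as tmp:
    path = write_robots(tmp, text)
    with open(path, "rb") as handle:
        raw = handle.read()

print(text)
print("Starts with a BOM:", raw.startswith(b"\xef\xbb\xbf"))
```

Using the `utf-8` codec rather than `utf-8-sig` is what keeps a byte-order mark out of the first line.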

Testing and Validation

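Google Search Console includes a robots.txt report (it replaced the older standalone robots.txt tester) that lets you verify how Google sees your file. The report shows which robots.txt files Google found for your site, when they were last fetched, and any warnings or errors it encountered while parsing them.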
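
Before uploading, a quick local check can catch the most common typos. The sketch below is a deliberately simple linter written for this article, not a replacement for Google’s own tooling; the list of known directives and the messages are assumptions chosen for illustration.

```python
KNOWN_DIRECTIVES = {"user-agent", "disallow", "allow", "sitemap", "crawl-delay"}

def lint_robots(robots_txt):
    """Return (line_number, message) pairs for likely mistakes."""
    problems = []
    seen_user_agent = False
    for number, raw_line in enumerate(robots_txt.splitlines(), start=1):
        line = raw_line.split("#", 1)[0].strip()  # ignore comments
        if not line:
            continue
        if ":" not in line:
            problems.append((number, "missing ':' separator"))
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field not in KNOWN_DIRECTIVES:
            problems.append((number, f"unknown directive '{field}'"))
        elif field == "user-agent":
            seen_user_agent = True
        elif field in ("allow", "disallow"):
            if not seen_user_agent:
                problems.append((number, "rule appears before any User-agent line"))
            if value and not value.startswith(("/", "*")):
                problems.append((number, "path should start with '/'"))
    return problems

# A sample file with four deliberate mistakes.
SAMPLE = """\
Disallow: /early/
User-agent: *
Dissallow: /admin/
Disallow: private/
Allow /public/
"""

for number, message in lint_robots(SAMPLE):
    print(f"line {number}: {message}")
```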

Deployment and Location

Upload the robots.txt file to your website’s root directory. The file must be accessible at yourwebsite.com/robots.txt for search engines to find and use it.

Advanced Robots.txt Strategies

Sitemap Integration

Including your XML sitemap location in your robots.txt file helps search engines discover and crawl your content more efficiently. This is particularly valuable for new websites or those with complex structures.
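
To confirm that a sitemap line is being picked up, you can read it back with Python’s `urllib.robotparser` (the `site_maps()` method requires Python 3.8 or later). This is an illustrative sketch; the absolute-URL check is a convenience added here, not part of the parser.

```python
from urllib.parse import urlsplit
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

Sitemap: https://yourwebsite.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# site_maps() returns None when the file has no Sitemap line.
sitemaps = parser.site_maps() or []

def is_absolute_url(url):
    """Sitemap locations should be full URLs, including scheme and host."""
    parts = urlsplit(url)
    return parts.scheme in ("http", "https") and bool(parts.netloc)

for url in sitemaps:
    status = "ok" if is_absolute_url(url) else "not an absolute URL"
    print(f"{url}: {status}")
```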

Crawl Delay Management

Some search engines respect a Crawl-delay instruction in robots.txt files, but support is not universal: Google, for example, ignores the directive. Where it is honored, it can help manage server load during peak traffic periods, though it should be used carefully to avoid slowing legitimate crawling.
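
The sketch below shows how a crawl delay is declared per crawler group and how it can be read back with Python’s `urllib.robotparser`. The `Bingbot` group, the 10-second value, and the `ExampleBot` name are illustrative assumptions, not recommendations.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: Bingbot
Crawl-delay: 10
Disallow: /temp/

User-agent: *
Disallow: /admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# crawl_delay() returns the delay in seconds, or None if the group sets none.
bing_delay = parser.crawl_delay("Bingbot")
other_delay = parser.crawl_delay("ExampleBot")  # hypothetical crawler name

print("Bingbot delay:", bing_delay)
print("Other crawlers:", other_delay)
```

The delay applies only to the group it appears in, so crawlers that fall under `User-agent: *` get no delay in this example.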

Handling Multiple Subdomains

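Large businesses with multiple subdomains need a separate robots.txt file for each subdomain, because crawlers look for the file at the root of every host and do not apply one host’s rules to another. Develop a consistent strategy that aligns with your overall SEO objectives across all domains.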
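
To see how many robots.txt files a site actually needs, you can derive the expected file location from each page URL. This is a small sketch using Python’s `urllib.parse`; the subdomains and pages listed are hypothetical.

```python
from urllib.parse import urlsplit

def robots_url(page_url):
    """Return the robots.txt location that governs the given page URL."""
    parts = urlsplit(page_url)
    return f"{parts.scheme}://{parts.netloc}/robots.txt"

def robots_files_needed(page_urls):
    """Return the distinct robots.txt locations covering a set of pages."""
    return sorted({robots_url(url) for url in page_urls})

# Hypothetical pages spread across a main site and two subdomains.
PAGES = [
    "https://yourwebsite.com/services/",
    "https://shop.yourwebsite.com/cart",
    "https://shop.yourwebsite.com/products/widget",
    "https://blog.yourwebsite.com/post-1",
]

for location in robots_files_needed(PAGES):
    print(location)
```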

International and Multi-language Sites

Businesses serving multiple countries or languages need careful robots.txt planning to ensure proper crawling of all relevant content while avoiding duplicate content issues.

Monitoring and Maintaining Your Robots.txt File

Regular Auditing

Schedule quarterly reviews of your robots.txt file to ensure it remains aligned with your website structure and business goals. Website changes, new sections, or updated privacy requirements may necessitate robots.txt modifications.

Performance Monitoring

Use Google Search Console and other SEO tools to monitor how search engines interact with your robots.txt file. Look for crawl errors or blocked pages that should be accessible.

Documentation and Change Management

Maintain documentation of your robots.txt configuration and the reasoning behind specific rules. This helps ensure continuity when team members change or when making future updates.

Integration with Overall SEO Strategy

Your robots.txt file should work in harmony with other SEO elements like sitemaps, internal linking, and content strategy. Regular reviews ensure all these elements support your search engine optimization goals.

The Business Impact of Proper Robots.txt Implementation

Improved Search Rankings

Businesses that implement strategic robots.txt files often see improved search rankings as search engines can focus their crawling efforts on the most important content. This concentrated attention often results in better indexing of key pages.

Enhanced Website Performance

Reducing unnecessary crawler traffic can improve website performance, particularly for smaller businesses with limited hosting resources. Faster websites provide better user experiences and often rank higher in search results.

Better Analytics and Insights

Cleaner crawling patterns result in more accurate analytics data, helping businesses make better decisions about their online strategy and content development.

Competitive Advantages

Many businesses neglect robots.txt optimization, creating opportunities for companies that implement these files strategically. Proper implementation can provide significant competitive advantages in search engine visibility.

Industry-Specific Robots.txt Considerations

Healthcare and Legal Compliance

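Healthcare practices and legal firms must be particularly careful about which content appears in search results. Robots.txt can keep compliant crawlers away from portals and document areas, but it does not restrict access on its own, so patient information, case details, and confidential documents must also be protected with authentication and access controls.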

E-commerce Product Management

Online retailers need sophisticated robots.txt strategies to handle seasonal products, discontinued items, and inventory management pages without creating SEO problems.

Service-Based Businesses

Professional service companies often use robots.txt to promote service pages while hiding internal tools, client portals, and project management systems.

Educational and Non-Profit Organizations

Schools and non-profits frequently need to balance public information sharing with protecting student or donor privacy through strategic robots.txt implementation.

Future-Proofing Your Robots.txt Strategy

Emerging Search Technologies

As search engines evolve and new crawling technologies emerge, robots.txt files must adapt accordingly. Stay informed about changes to search engine guidelines and update your files as needed.

Mobile and Voice Search Considerations

The growing importance of mobile and voice search may require adjustments to robots.txt strategies. Ensure your file supports optimal crawling for all types of search experiences.

AI and Machine Learning Impact

Search engines are increasingly using artificial intelligence to understand websites. Your robots.txt strategy should support these advanced crawling and understanding capabilities.

Privacy and Compliance Evolution

Changing privacy regulations and compliance requirements may necessitate robots.txt updates. Regular reviews ensure continued compliance with evolving legal requirements.

Getting Professional Help with Robots.txt Implementation

When to Seek Expert Assistance

While basic robots.txt files are relatively simple, complex websites or businesses with specific compliance requirements often benefit from professional assistance. Signs you might need expert help include frequent crawl errors, declining search visibility, or complex multi-domain structures.

The Value of Professional SEO Services

Experienced SEO professionals understand the nuances of robots.txt implementation and can integrate these files into comprehensive search engine optimization strategies that deliver measurable business results.

Ongoing Management and Support

Robots.txt files require ongoing maintenance and updates as websites evolve. Professional SEO services can provide continuous monitoring and optimization to ensure optimal performance.

BitekServices: Your Robots.txt and SEO Partner

At BitekServices, we understand that effective robots.txt implementation requires both technical expertise and strategic business thinking. Our approach combines deep technical knowledge with understanding of your business goals and industry requirements.

Comprehensive Website Analysis

We begin every robots.txt project with thorough website analysis, identifying opportunities for improved search engine crawling while protecting sensitive business information.

Custom Implementation Strategy

Every business is unique, and we develop robots.txt strategies tailored to your specific website structure, business goals, and industry requirements.

Ongoing Monitoring and Optimization

Our relationship with clients extends beyond initial implementation. We provide continuous monitoring and optimization to ensure your robots.txt file continues supporting your SEO goals as your business grows.

Integration with Complete SEO Services

Robots.txt implementation works best as part of a comprehensive SEO strategy. We integrate robots.txt optimization with content strategy, technical SEO, and other optimization efforts for maximum impact.

Take Control of Your Website’s Search Engine Interaction

Your robots.txt file might be invisible to website visitors, but its impact on your search engine success is undeniable. This small file can be the difference between search engines finding and ranking your most important content or wasting time on irrelevant pages.

Don’t let search engines navigate your website blindly. A properly configured robots.txt file guides them directly to your most valuable content while steering them away from areas that do not belong in search results.

The investment in proper robots.txt implementation is minimal, but the potential returns in improved search rankings, more efficient crawling, and better website performance can be substantial.

Ready to optimize your website’s robots.txt file and improve your search engine performance? BitekServices specializes in technical SEO implementations that deliver measurable results. Contact us today to schedule a comprehensive website analysis and discover how proper robots.txt configuration can enhance your online visibility.

Your competitors might be neglecting this crucial SEO element—don’t let that advantage slip away. Take action today and give your website the search engine guidance it deserves.